When deploying a database monitoring tool, one of the first things you need to do is to determine the size of your deployment. So, where do you start?

In a previous blog post I described the various aspects that can have an impact on the capacity requirements needed for a database monitoring solution. These aspects have a high variance and in many cases are not known in advance.

Identifying the required capacity per database is difficult, and trying to calculate it by estimating each of those aspects separately can produce an error of a few orders of magnitude. In this post, we’ll walk through practical steps you can use to plan capacity for your database monitoring solution more accurately by looking across all the databases in your deployment.

Avoid the Traps

First, let’s look at a few things to avoid. While server horsepower might seem like a good place to start, it does not always correlate with the actual traffic to and from an application. To illustrate, let’s consider two different types of applications that run on the same server:

- A weather-prediction application may be CPU intensive due to complex prediction algorithms which it periodically runs, while the inputs for those algorithms are very small. Monitoring such an application would show very little traffic.

- A CRM application’s main purpose may be getting reports and querying large amounts of information for customer analysis. Monitoring such an application would show very high traffic.

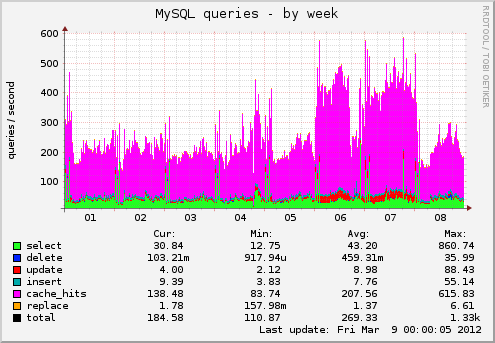

Even within the same application, traffic usually varies over time and can be erratic (Figure 1).

Figure 1: Example of database activity

Ideally, you would measure the actual traffic per database over time and use that to build your estimate. In most cases, however, that approach is not feasible in terms of time and resources. Most organizations have a large number of servers to measure, and an accurate estimate would require capturing traffic for at least a full working week, if not more, and then analyzing it.

Guidelines for Estimation

With those things in mind, let’s look at how you can create a capacity estimate for your database monitoring tool. Two main factors go into creating an estimate:

- Database technology – different technologies are optimized for different types of applications. For example, the weather-prediction application may use a big data solution while the CRM application may use a traditional RDBMS.

- Number of CPU cores – despite the high variance in application CPU usage, the number of cores of a database server is the best available data point for estimating the amount of traffic a database produces, since there is usually a direct correlation between the number of CPU cores and the amount of traffic.

Plan across all databases, not by individual database

An estimate developed with only a small number of databases will lack accuracy. For instance, trying to estimate the resources required to monitor a single Oracle server with 8 CPU cores or anticipate its expected traffic will be like trying to estimate the weight of a single sheet of paper; the weight could vary from less than 0.1 ounce to more than 5 ounces depending on material and size. For database monitoring the variance can be several orders of magnitude.

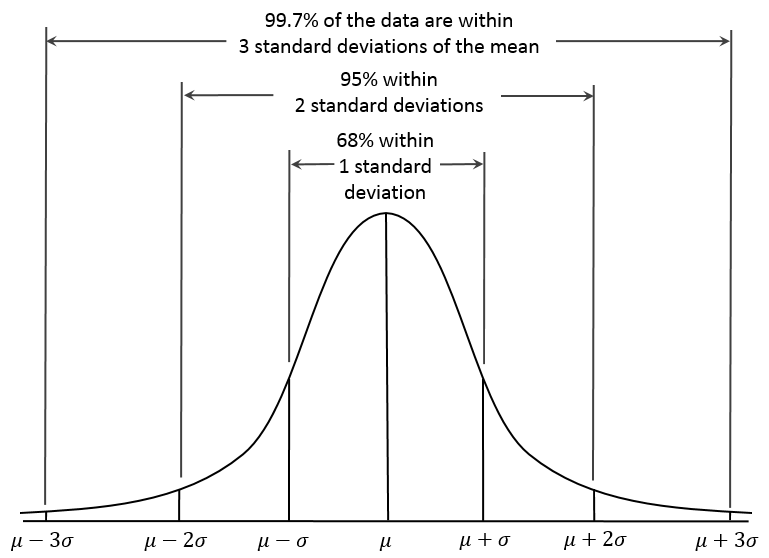

Based on the law of large numbers, as the number of databases increases, so does the accuracy of the estimate. In a normal distribution, most samples will be fairly close to the average (as measured by the standard deviation), with some extreme outliers, either very low or very high (see Figure 2).

Figure 2: Normal distribution of a load of databases with the same number of cores

Let’s look at an example. Assume you have a total of 10 databases in your deployment (and that 10 is a large number). The calculation for the entire database deployment will be much more accurate than the calculation for a single database. Figure 3 compares an estimated database monitoring capacity with the actual capacity. The sum across all databases is close to the estimate, but specific databases (e.g. Database3) consumed three times more capacity than estimated:

Figure 3: Example of an estate with 10 databases, capacity estimate versus reality. (Y axis numbers for reference only; in this example they can represent Imperva Performance Units or IPUs.)
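The effect described in this section can be illustrated with a small simulation. The sketch below is only an illustration under stated assumptions: the per-database load is drawn from a log-normal distribution (a choice made here to get a wide spread, not something measured from real deployments), and the function name `relative_spread_of_total` is hypothetical. It reports how much the total load varies relative to its average as the number of databases grows.

```python
import random
import statistics


def relative_spread_of_total(num_databases, trials=2000, seed=0):
    """Return (standard deviation / mean) of the summed load of an estate.

    A smaller value means the total is easier to predict from an average.
    """
    rng = random.Random(seed)  # fixed seed so repeated runs give the same numbers
    totals = []
    for _ in range(trials):
        # ASSUMPTION: each database's load is log-normal, so a few servers
        # sit far above the typical value. Real estates may look different.
        total = sum(rng.lognormvariate(0.0, 1.0) for _ in range(num_databases))
        totals.append(total)
    return statistics.pstdev(totals) / statistics.mean(totals)


if __name__ == "__main__":
    for n in (1, 10, 100):
        print(f"{n:>3} databases: relative spread {relative_spread_of_total(n):.2f}")
```

The relative spread shrinks as databases are added, which is why an estimate for the whole estate tends to be more reliable than an estimate for any single server.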

Capacity Planning for the Imperva Database Activity Monitoring Solution

At the core of Imperva’s Database Activity Monitoring tool are SecureSphere gateways which monitor traffic of database servers. For capacity resource planning purposes, you need to determine how many gateways are required for monitoring all your database activity.

SecureSphere gateway models differ in their monitoring capacity, which is expressed in “Imperva Performance Units” or IPU. One IPU can handle database traffic generated by a database server with X CPU cores.

To illustrate how one would estimate the number of SecureSphere gateways needed for a deployment, here’s a sample site with 100 RDBMS databases (a mix of Oracle, MSSQL, etc.):

- Each database has an average of four (4) cores.

- This equates to 400 total cores (100 databases x 4 cores each), with each core requiring an average of 100 IPU (SecureSphere requires 100 IPU on average per RDBMS core).

- This would put the sizing estimate at 40,000 IPUs (400 cores x 100 IPU) for this deployment.

- Using the SecureSphere gateway appliance x6510, three (3) gateways would be required for this deployment (plus one additional for redundancy in a cluster of gateways).
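The arithmetic in this example can be sketched in a few lines of Python. The function names are hypothetical, and the gateway capacity used below (15,000 IPU) is a placeholder chosen only so that the sketch reproduces the three gateways mentioned above. It is not a published rating for the x6510, so substitute the real capacity of your gateway model.

```python
import math


def estimate_ipu(num_databases, avg_cores_per_db, ipu_per_core):
    """Total IPU estimate: databases x average cores x IPU per core."""
    return num_databases * avg_cores_per_db * ipu_per_core


def gateways_needed(total_ipu, gateway_capacity_ipu, redundant_spares=1):
    """Return (active gateways, active gateways plus redundant spares)."""
    active = math.ceil(total_ipu / gateway_capacity_ipu)
    return active, active + redundant_spares


# Figures from the example: 100 RDBMS databases, 4 cores each, 100 IPU per core.
total = estimate_ipu(num_databases=100, avg_cores_per_db=4, ipu_per_core=100)

# ASSUMPTION: placeholder capacity, not an actual appliance specification.
active, with_spare = gateways_needed(total, gateway_capacity_ipu=15_000)

print(total, active, with_spare)
```

Keeping the capacity and the IPU-per-core figure as parameters makes it easy to rerun the estimate per technology or per gateway model.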

Keep in mind, this is a simplified example. It explains the basic calculations involved in capacity sizing and gives you a good-enough base to start from. In a real-life scenario, however, there are multiple technologies, a large variance in the number of cores per database server, different geo-locations, redundancy schemes, and the other factors mentioned above, all of which can significantly impact the sizing estimate. Imperva experts work with our customers to evaluate these factors and develop a more customized sizing estimate.

What’s Next?

With an estimate of the number of SecureSphere gateways required for monitoring all your databases in hand, the next step is planning the actual deployment and management of the solution.

Common challenges in this phase include:

- Initial deployment

    - How do you know which databases to assign to which gateway to avoid overloading a specific one?

- Capacity management over time

    - How do you validate that your gateways are not overloaded?

    - How do you optimize gateway utilization over time?

    - How can you easily manage your gateways’ capacity over time?

The Imperva Database Activity Monitoring tool offers a range of functionality to handle these issues, some of which include:

- Clustering of SecureSphere gateways, which allows:

    - Automatic assignment of new agents to the least loaded gateway

    - Automatic load balancing

    - Manual load balancing

- Real-time measurement of each agent’s impact on a gateway
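To make the idea of assigning work to the least loaded gateway concrete, here is a minimal sketch of a greedy placement. It is a generic illustration, not a description of how SecureSphere implements its clustering; the function name, gateway names, and load figures are all hypothetical.

```python
import heapq


def assign_least_loaded(db_loads, gateway_names):
    """Greedily place each database on the gateway with the lowest current load.

    db_loads maps a database name to its estimated load (for example, in IPU).
    Returns (assignment, totals): database -> gateway, and gateway -> total load.
    """
    # Min-heap of (current load, gateway name); the least loaded gateway is on top.
    heap = [(0, name) for name in gateway_names]
    heapq.heapify(heap)
    assignment = {}
    # Placing the largest databases first usually gives a more even result.
    for db, load in sorted(db_loads.items(), key=lambda kv: kv[1], reverse=True):
        current, gateway = heapq.heappop(heap)
        assignment[db] = gateway
        heapq.heappush(heap, (current + load, gateway))
    totals = {gateway: load for load, gateway in heap}
    return assignment, totals


# Hypothetical estimated loads for five databases and two gateways.
loads = {"db1": 800, "db2": 400, "db3": 400, "db4": 1200, "db5": 400}
assignment, totals = assign_least_loaded(loads, ["gw-a", "gw-b"])
print(assignment)
print(totals)
```

Because the inputs are estimates, a placement like this is only a starting point; measured per-agent load would still be needed to rebalance over time.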

Learn about the full range of functionality available in Imperva data protection and compliance solutions.
